Recurrence relation

what is Recurrence relation

In mathematics, a recurrence relation is an equation that expresses the

n -th term of a sequence as a function of the k preceding terms.

examples

merge sort

sort(array A, int begin, int end):

if(begin = end)

return;

sort(A, begin , (begin + end)/2)

sort(A, ((begin + end)/2)+1, end)

pivot = (begin + end)/2

Merge(A,begin, pivot, end)

we can represent this algorithm as a recurrence relation in the following way:

this formula is the representation of #merge sort. how ever it should be mention that as learned in Asymptotic notations we can ignore any constant or round value, so lets rewrite the formula as the following:

How can we calculate a Recurrence relation

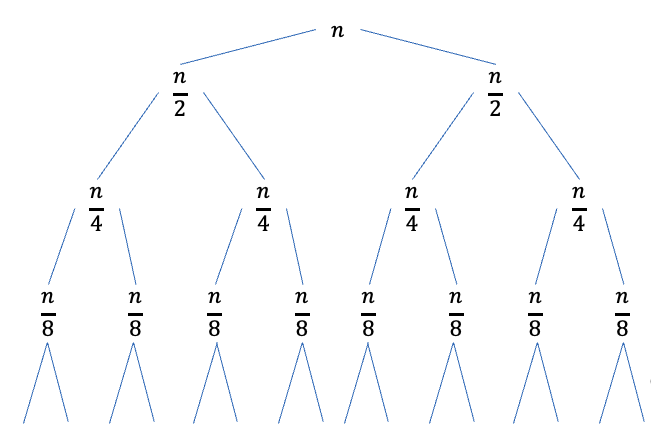

Recursion tree

lets look at the following relation :

we can calculate

this will continue until

so, lets explain whats going on:

- each level of the tree is performing

work - each branch of a level is performing

where is the branch level so in total each branch is performing - as we said this tree will expand until

there for the height of the tree will be . - now we are performing

calls where each call perform work. there fore:

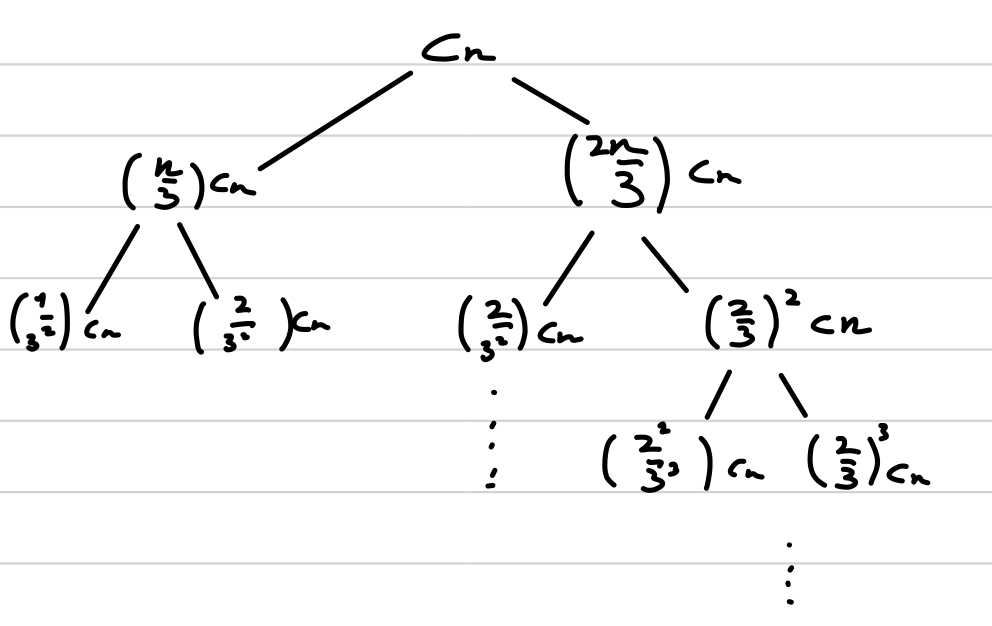

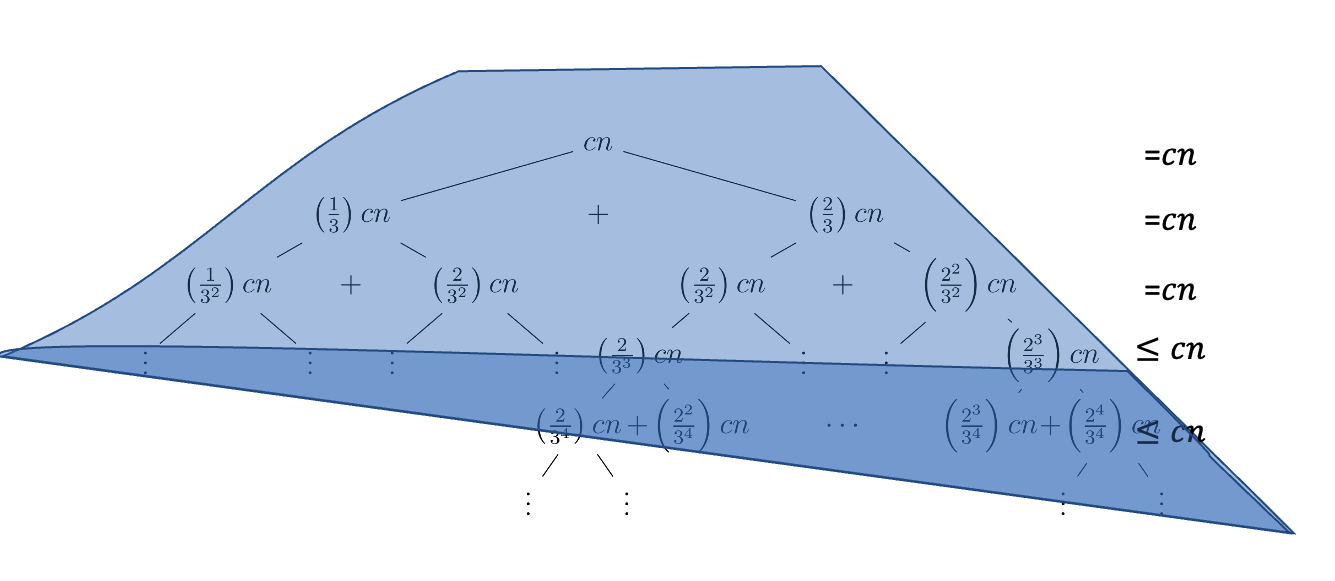

unequal Recursion tree

lets look at the next relation:

where

this time the tree is not even as the tree we saw before and it will look something like this:

each level is performing

as before the tree will end at

let's ask our self : so what if the tree is not equal? what will happen? the answer is the following. in the #Recursion tree from before, each branch performed

but that only worked because they were equal. in this case at some point the left branch will finish its work and the right one will continue his.

that means that at some branch

so how to calculate

-

we will divide the tree to 2 parts: the parts where

and the part where

the tree will look something like this:

-

now we going to calculate the maximum height of the tree in the first part and the maximum height in the second part.

-

the first part will be the best case scenario of our function and therefore will be

-

the second part will be the worst case scenario of our function and therefore will be

. -

the equal tree height will be

and the unequal tree height will be -

so as we said the first part time complexity will be

and the second part will be but we know from Asymptotic Analysis that switching between bases is performed by constant and there for the total time complexity of this function will be

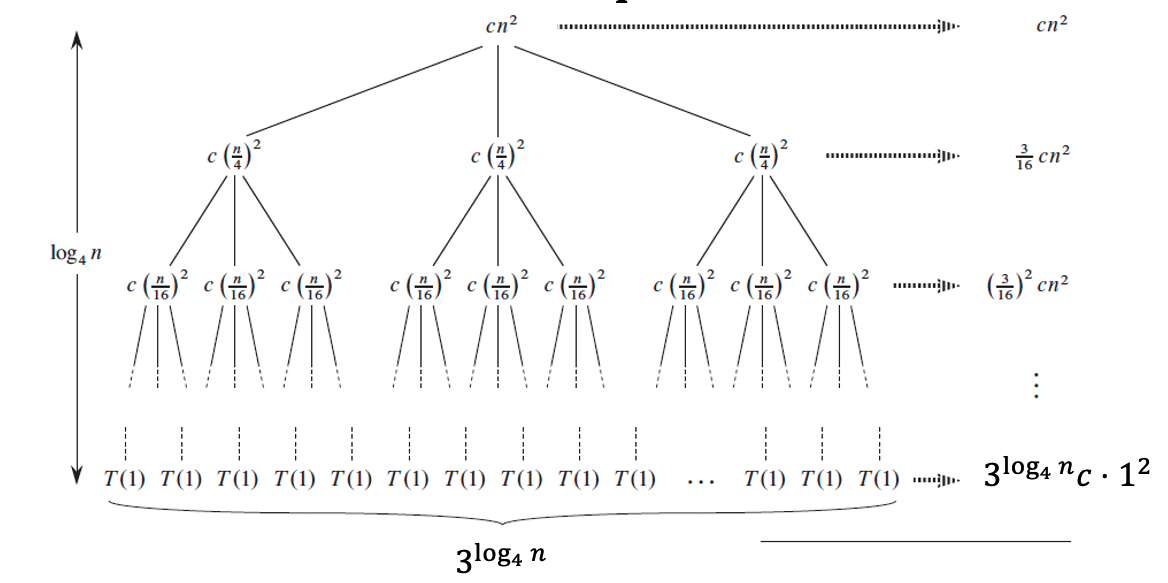

Recursion tree with more than 2 branches

lets check the following example:

where

the tree will look something like this:

lets see whats going on :

-

because the work inside the recursion is divisive each branch will do less work until

-

so we need to understand 2 things : the first one is the height of the tree so let's build a formula assuming

is the branch in the tree. and we know that

the formula will be as the following :

at each brancheach leaf will do the following job the first is 0. -

each branch will do the sum of that that will calculate to:

-

if we sum all the floors we will get :

-

this is a Geometric progression where

and therefore it will sum up to a constant. -

the best case scenario is

because that the job the first branch is doing -

because the sum is the worst case scenario and the first work in the best and they are both of

the Asymptotic notations of this formula will be :

Iterative method

in this method we will "open" the formula to get an idea if what will happen at the end. (we need to now the value of

example 1

lets open the formula:

lets see how its sums up in

if we try to build it until the end we will get :

notice that

how ever, this is not a valid proof and we need to proof it with # Inductive reasoning

- base-

we will get as needed. - step- assume that

and we will prove for

we will use full induction most of the times

continue develop the expression and we will get the above result.

because we know that

now assume

positioning method

we will guess what is the big

- lets assume that

which means that - proof you assumption with inductive reasoning

- do the same for

if possible. - try the same for a smaller big

and

the Master theorem

the master theorem help us solve a specific type of Recurrence relation but is the fastest way from all the above.

for formulas that look like the following:

where

the base for the master theorem is simple find out who from the root or the recursion part does more work.

we can achieve this with the following rules:

case 1:

this is the case where the recursive part works harder than the root

case 2:

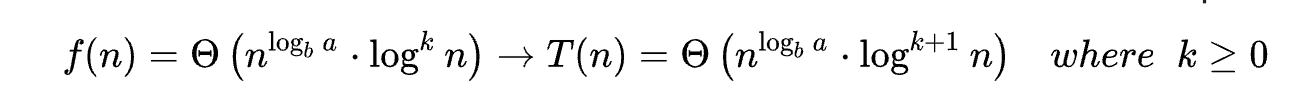

this is the case when both the recursive part and the root does the same work

there is an extended case for that one :

case 3:

this case is when the root does more work than the recursive part.

examples:

therefore:

therefore:

switching variables method

lets look at the following relation:

this can be a hard expression to develop using the methods above..

we should try make it easier by switching variables:

mark

this is a recurrence relation we already know

therefore: